C.5: Future proposals

Pre-screening or pre-filtering

86. In March 2018, the NCA gave evidence before the Home Affairs Select Committee Inquiry into ‘Policing for the Future’. The NCA set out “three asks that were made of industry”.[1] The first of those requests related to pre-screening or pre-filtering of known and unknown imagery to prevent indecent images offences occurring in the first place.

87. In relation to known imagery, Mr Jones said:

“you can stop an offender from accessing a known image because it’s been hashed, it’s detectable, it’s an illegal commodity which is moving digitally. So if you prevent access to that, you prevent an offence. It’s as simple as that.”[2]

88. In November 2019, the NCA stated that it was still possible to access known child sexual abuse imagery on “mainstream” search engines within just “three clicks”.[3]

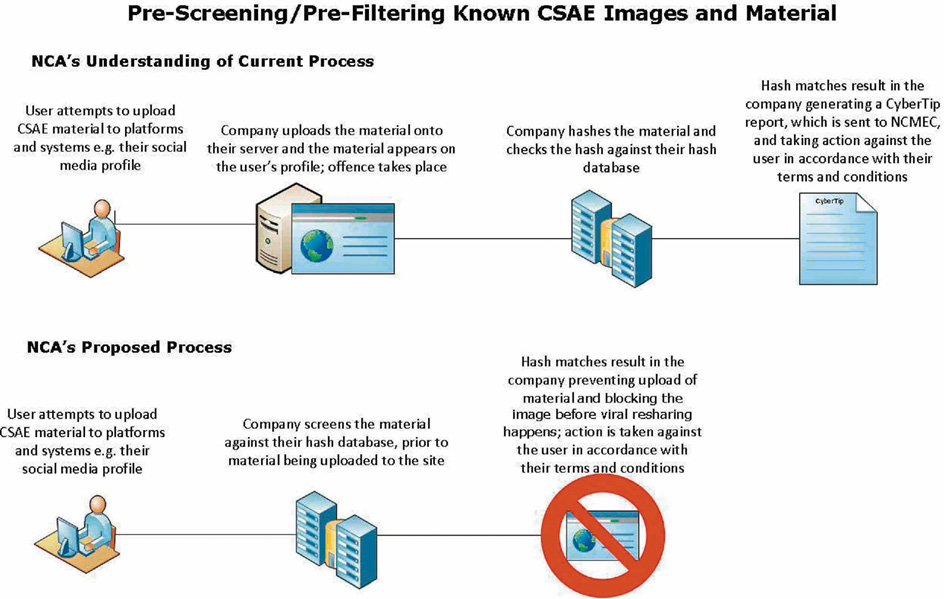

89. The essence of the NCA’s proposal is for an internet company to scan the image against their hash database prior to the image being uploaded. If the image is identified as a known indecent image, it can then be prevented from being uploaded. The graphic below sets out the current screening process and the proposed process when pre-screening or pre-filtering is used:

Long Description

Pre-screening/Pre-filtering known CSAE images and material

NCA Understanding of current process

- User attempts to upload CSAE material to platforms and systems e.g. their social media profile

- Company uploads the material onto their server and the material appears on the user's profile; offence takes place

- Company hashes the material and checks the hash against their hash database

- Hash matches result in the company generating a CyberTip report, which is sent to the NCMEC, and taking action against the user in accordance with their terms and conditions

NCA's proposed process

- User attempts to upload CSAE material to platforms and systems e.g. their social media profile

- Company screens the material against their hash database, prior to material being uploaded to the site

- Hash matches result in the company preventing upload of material and blocking the image before viral resharing happens; action is taken against the user in accordance with their terms and conditions

Current indecent image screening process and NCA’s proposed process

Source: NCA000366
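
The difference between the two processes in the graphic can be sketched in code. This is a minimal illustration only, not any company's implementation. The `Platform` class, its method names and the `flagged` list are hypothetical. SHA-256 is used purely as a stand-in and matches only byte-identical files, whereas tools such as PhotoDNA use a different kind of hash intended to recognise altered copies of a known image.

```python
import hashlib
from dataclasses import dataclass, field


def digest(data: bytes) -> str:
    # Stand-in hash (assumption): exact-match SHA-256, not a perceptual hash.
    return hashlib.sha256(data).hexdigest()


@dataclass
class Platform:
    """Hypothetical platform holding a database of hashes of known material."""
    known_hashes: set
    published: list = field(default_factory=list)  # material visible on the site
    flagged: list = field(default_factory=list)    # hashes that triggered action

    def upload_current(self, data: bytes) -> bool:
        """Current process: the material goes live, then it is hashed and checked."""
        self.published.append(data)       # material appears before any check
        h = digest(data)
        if h in self.known_hashes:
            self.flagged.append(h)        # report and user action happen afterwards
        return True

    def upload_prescreened(self, data: bytes) -> bool:
        """Proposed process: hash and check first; publish only if there is no match."""
        h = digest(data)
        if h in self.known_hashes:
            self.flagged.append(h)        # upload refused; action against the user
            return False
        self.published.append(data)
        return True
```

In this sketch the only difference between the two methods is the order of the check and the publication step. That ordering is the substance of the NCA's proposal: under pre-screening a match means the material never appears on the site.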

90. Mr Jones explained that the introduction of 5G will enable quicker upload and download speeds with a consequential increase in the speed at which indecent imagery can be shared. The NCA considers that if pre-screening or pre-filtering is used by companies to prevent access to the imagery at the outset, it will allow law enforcement the “capacity and capability to chase first-generation images and safeguard children as quickly as possible”.[4] The internet companies could then use their classifier technology to identify previously unknown child sexual abuse material and first-generation images. These images would be hashed and incorporated into the NCMEC database thereby expanding the pool of images that could be prevented from being accessed.

91. Google agreed that pre-filtering was a “proactive” approach that “prevents the offending material from being disseminated”[5] but said that the image needed to be uploaded (or found by a Google search on another website) in order for their image classifiers to be used.[6] Ms Canegallo stated that Google “has not come to a conclusion on [the] feasibility or efficacy” but she thought that pre-filtering “presents serious technological and security challenges”.[7]

92. Ms de Bailliencourt was aware of the NCA’s request for pre-screening and was asked “What steps, if any, are Facebook taking to prevent the image being uploaded at the outset?” She replied:

“we didn’t develop PhotoDNA … Microsoft developed the technology, so they may be better placed to provide additional insights here. I know the way it is working on the platform would generally move so quickly that it’s really a matter of seconds before its removal.” [8]

Ms de Bailliencourt’s answer was that, given the obligation to report any child sexual abuse material to NCMEC and the potential for an individual to be arrested, Facebook “need to make sure that we have reasonable conclusion that the content was uploaded and is indeed matching any of the hashes that we have”.[9] As a result, we remain unsure about Facebook’s position in relation to pre-screening indecent images of children.

93. Apple considered that filtering known child sexual abuse material images was “effective”.[10]

94. Microsoft explained that it screens for known indecent images of children at the point at which the image is shared and that “applying PhotoDNA at that point is actually very fast”.[11] Mr Milward explained that Microsoft:

“feel that the invasion of privacy around routinely screening people’s private files and folders would not be accepted by the general public as being an appropriate level of intrusion by a technology company”.[12]

95. No industry witness said that it was technologically impossible to pre-screen their platforms and services. PhotoDNA is efficient in detecting a known indecent image once it has been uploaded, but it is important to try to prevent the image being uploaded in the first place and thereby prevent access. The use of pre-screening or pre-filtering should be encouraged in order to fulfil the government’s expectation that “child sexual abuse material should be blocked as soon as companies detect it being uploaded”.[13] This is a key aspect of the preventative approach that is necessary.

Self-generated imagery

96. The ease and frequency with which children can share self-generated indecent imagery is all too apparent.

96.1. The government’s Online Harms White Paper (published in April 2019)[14] refers to surveys that indicate between 26 percent and 38 percent of 14 to 17-year-olds have sent sexual images to a partner and between 12 percent and 49 percent have received a sexual image.

96.2. The IWF states that self-generated imagery now makes up one-third of the child sexual abuse material that it removes from the internet. Of that one-third, 82 percent of the imagery features 11 to 13-year-olds, with the overwhelming majority featuring images of girls.[15]

96.3. In Greater Manchester, children are recorded as the offender in nearly half of all indecent images of children offences.[16] In Cumbria, “in the last three financial years, children make up the largest group of suspects recorded” for indecent images of children offences.[17]

96.4. The ‘Learning about online sexual harm’ research report stated that “The issue of sexual images received considerable attention among interview and focus group participants”.[18] The children told the researchers about how they and/or their peers received unsolicited explicit messages (primarily sent by males to females) and requests to send someone nude images. As one 14-year-old interviewee said:

“I don’t think my dad realises how many messages from random boys I get or how many dick pics I get. And I have to deal with it every day … it’s kind of like a normal thing for girls now … I’ve been in conversations [online] like, ‘Hi. Hi. Nudes?’ I’m like, ‘No’ … yeah, it literally happens that quickly. Like, ‘What’s your age?’ And you’ll say how old you are, you’re underage, and they’ll be like, ‘Oh OK’, and then they’ll ask for pictures.”[19]

97. The Protection of Children Act 1978 criminalises the making, taking or distribution of an indecent image of a child irrespective of the circumstances in which the image is taken. Where, for example, sexual images are shared between two 16-year-olds who are, legally, sexually active, both are committing a criminal offence and could be prosecuted.

98. Chief Constable Bailey explained that, in conjunction with the Home Office, ‘Outcome 21’ was devised in response to the concern that:

“children were becoming criminalised, and as a result their life chances were then going to be significantly undermined because the Disclosure and Barring Service would then disclose if they wanted to become a police officer or a nurse or a social worker”.[20]

Outcome 21 enables police to record that a crime has been committed but the child is not prosecuted on the basis that it is not in the public interest to do so.[21] Outcome 21 is only used where there are no aggravating factors, for example where the image was not shared as a result of blackmail or extortion. Outcome 21 is therefore a sensible response to a very real problem.

99. The Inquiry heard about a joint NCA, IWF, National Society for the Prevention of Cruelty to Children (NSPCC), NCMEC and Home Office initiative called ‘Report Remove’. The aim of Report Remove is to enable a child to report a self-generated image and request that the image be taken down. As Mr Jones said:

“we’ve … come up with a viable system that will allow us to quarantine the image, prevent the image from being shared amongst sex offenders, safeguard the child, who may need help and advice, and not criminalise them”.[22]

In reporting the image, the child will not be directed to law enforcement. The procedure is being designed to ensure that once the image is hashed it is flagged as a ‘Report Remove’ image. This will ensure that NCMEC and, subsequently, the NCA know that this is an image that has come from this initiative where the victim’s identity is known.

Age verification

100. The Inquiry heard evidence that child sexual abuse material relating to older children is often found in public forums on the internet, including on adult pornography websites. Professor Warren Binford, a trustee of Child Redress International (CRI),[23] gave an example whereby 60 variations of an image of a pubescent victim were posted to 538,729 unique URLs and 99 percent of those URLs were found on 14 adult sites.[24]

101. Chief Constable Bailey told us that “the greatest percentage of people now viewing online is not, as I think an awful lot of people would perceive it to be, in the 40s and 50s, it’s that age group of 18 to 24”.[25] He added that the availability of pornography is:

“creating a group of men who will look at pornography and the pornography gets harder and harder and harder, to the point where they are simply getting no sexual stimulation from it at all, so the next click is child abuse imagery. This is a real problem. It really worries me that children who should not be being able to access that material … are being led to believe this is what a normal relationship looks like and this is normal activity.”[26]

102. The NCA gave the example of Tashan Gallagher, who in March 2019 was sentenced to 15 years’ imprisonment for child sexual abuse offences, having:

“viewed images for probably two and a half years. By the time we captured that individual, he had progressed through a journey which had taken him through a series of forums who had told him his behaviour was normal, they had rationalised his behaviour, he had become desensitised and he encountered the dark web. When he tried to get into the dark web ... They wouldn’t let him into that forum unless he produced new, first-generation images.”[27]

To gain access to the forum, Gallagher recorded himself raping a six-month-old baby girl and sexually assaulting a two-year-old boy.

103. Mr Jones explained that a number of perpetrators recently arrested by the NCA “aren’t people who would be seen as the stereotypical person that poses a threat to a child”.[28] These were people who had grown up in the internet age. They had initially viewed images online but had gone on to engage in contact child sexual abuse. Mr Jones said there was a “very low barrier to entry for offenders who seek access to child abuse images” and that these individuals had crossed it.[29]

104. The Inquiry’s ‘Learning about online sexual harm’ research considered that children’s “repeated exposure” to being sent sexual images and/or requests for them “could lead to desensitisation, which meant such incidents became accepted as an everyday part of life rather than something harmful to be acted on”.[30]

105. In 2016, the government proposed legislation, which became the Digital Economy Act 2017 (DEA), to restrict access to pornographic websites to those aged 18 or over. In October 2019, the government announced that it would not be implementing the part of the DEA concerning age verification controls designed to ensure that those aged under 18 cannot access those sites. The government said that the reason for this decision was to ensure that “our policy aims and our overall policy on protecting children from online harms are developed coherently” and “that this objective of coherence will be best achieved through our wider online harms proposals”.[31]

106. Chief Constable Bailey considered that the DEA was “really an important element”[32] in preventing children from becoming desensitised by viewing adult pornography and potentially seeking out indecent images of children. This echoes comments made by children who participated in the ‘Learning about online sexual harm’ research who identified exposure to pornography as being one of a number of examples of online sexual harm.[33] Legislation is required in order to ensure that children are protected from harmful sexualised content online, and this part of the DEA was an important measure designed to prevent children viewing adult sexual material. The value of this part of the legislation was, and remains, obvious – it may prevent some children being exposed to child sexual abuse material. Delaying or deferring action until the Online Harms legislation comes into force fails to recognise the urgency of the problem.